2025-ART/INSTALLATION.

In good hands, Artificial Intelligence (AI) and Machine Learning (ML) can help society adapt to climate change, for example by developing more efficient systems that reduce greenhouse gas emissions, by training models that make wind farms more efficient, or by improving the quality of solar forecasting for solar generators. On the other hand, training neural networks to analyze “big data” requires large infrastructures and enormous resources. Consequently, it involves substantial energy consumption, and its environmental cost grows in direct proportion to that consumption.

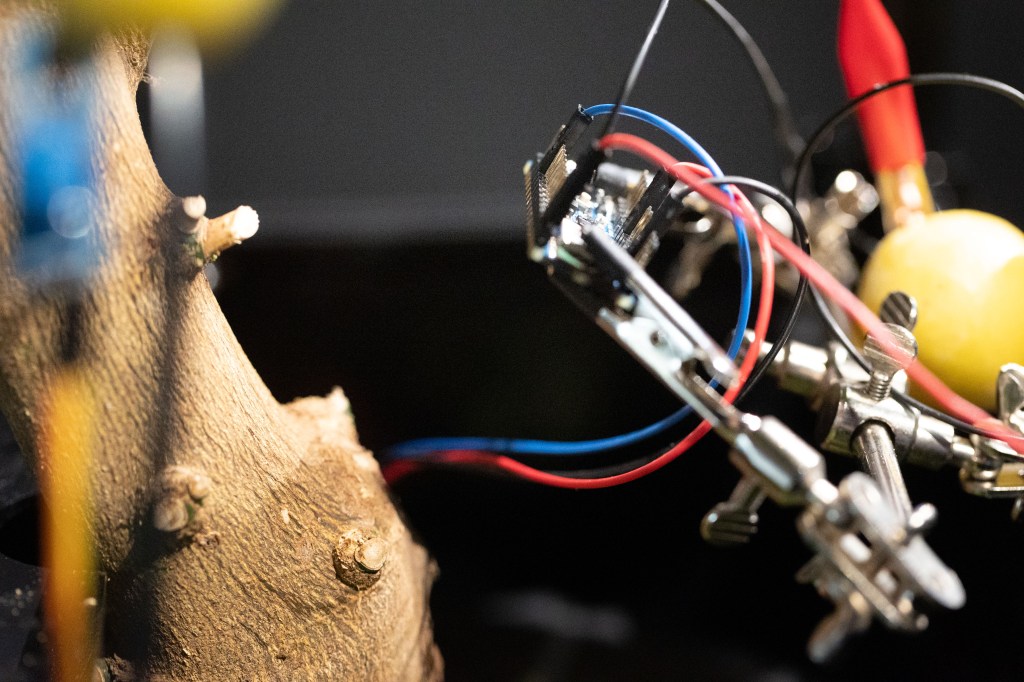

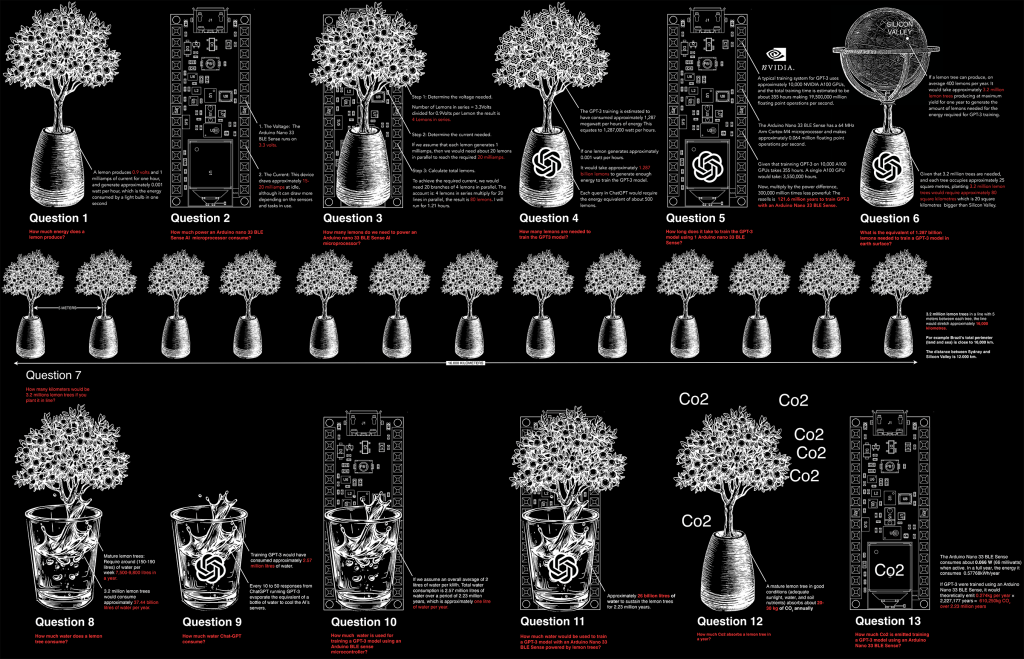

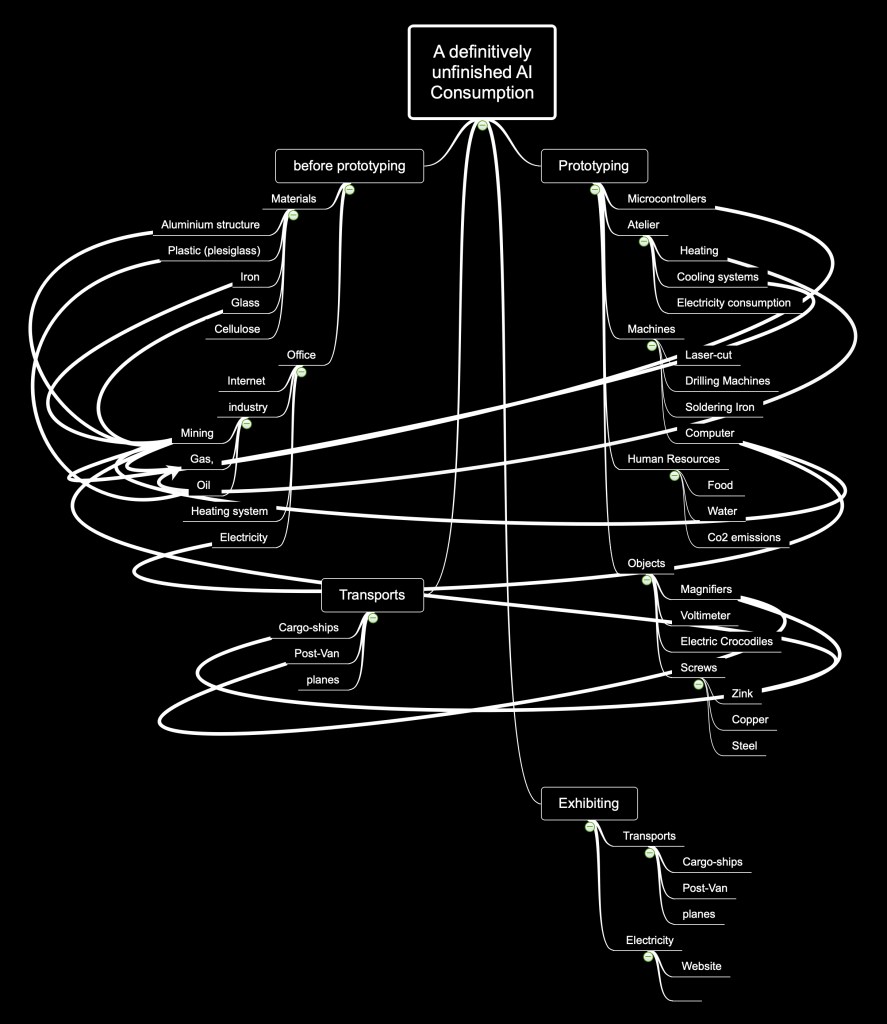

This paradigm is reflected in the artwork “A Definitely Unfinished AI”, an installation that uses electricity produced by lemon trees to power an ultra-low-power (ULP) AI microcontroller. The installation is designed to optimize energy consumption and keep a lemon tree alive. Unfortunately, the energy obtained from the lemon tree can power the microcontroller for no more than 0.3 seconds. “A Definitely Unfinished AI” is a work in progress, perpetually awaiting research funding to be completed, while playing with the perplexity of watching the tree die.

Exhibitions:

2025-. “Conexiones lúcidas”, at Universitat Politècnica de València (Spain).

The installation consists of TinyML microcontrollers; sensors that measure temperature, humidity and luminosity; and various energy-saving algorithms that combine the information collected by these sensors. A diagram shows the energy used to create the artwork itself and the materials and resources needed to make it, as well as the energy consumed in the AI training process.

Critical Training:

Artistic strategies to mitigate Artificial Intelligence (AI) energy cost.

Paper and research by César Escudero Andaluz

Abstract

This paper focuses on the sustainability of machine learning (ML), namely the carbon emissions and electricity consumption produced by training ML neural networks, and calls for efficient use of GPUs and cloud technology. It analyses specific research and artworks capable of visualising, contextualising and criticising AI energy consumption, extracting the strategies developed by researchers and artists to unmask this invisible, black-boxed technology operating beyond human perception.

Finally, it encourages artists to develop artworks that do not rely on these expensive technologies, but instead make visible the physical AI infrastructure hidden behind the graphical user interface (GUI).

Keywords

Sustainable AI, Neural Network Training, AI Power Consumption, Critical Art.

Introduction

Background: The term “Critical Mining” (Nadal, Escudero, 2017) was initially conceived to explore the consequences of the electricity consumption of networked computers, the waste of resources and the CO2 emissions produced by Bitcoin blockchain miners. It analyses the technological, economic, ideological and ecological aspects of the Bitcoin mining process, illustrated by the critical art projects “Bittercoin, the worst miner ever” (2016) and “Bitcoin of Things (BoT)” (2017) by Martín Nadal and César Escudero. [1]

Machine Learning can help society adapt to climate change, for example by developing more efficient systems that reduce greenhouse gas emissions; improving the ability to map and understand the size and value of underground oil and gas deposits; training models for wind and solar farms so that they generate electricity more efficiently, for instance by orienting turbine heads to capture a greater fraction of the incoming wind; or improving the quality of solar forecasting for solar generators.

On the other hand, training neural networks demands large infrastructures, computing power and significant energy. Additionally, most platforms, infrastructures and algorithms work to the benefit of a few tech companies. The impact that AI and ML have on society, the economy and the environment is invisible and black-boxed to human perception, because most of the operations happening behind the Graphical User Interface (GUI) are not explained or displayed on screen in visual or alphanumeric form. There is no link, overview or indicator showing, for example, how much electricity a neural network’s training process consumes or how much CO2 it emits.

In recent years, GPUs for training neural networks and cloud computing have become increasingly available, for example through Google Cloud Platform, Microsoft Azure and Amazon Web Services. Additionally, AI platforms, real-time applications and AI-based websites require significant energy to train their models; deepfake apps, AI translators, filters and virtual backgrounds are already a growing reality.

Today the lack of transparency is evident: none of these platforms provides information about the enormous energy expenditure linked to training AI models.

Society needs to bring this lack of transparency and common sense to the forefront of the discussion. This paper emphasises the continuing engagement of researchers and artists, whose analyses, critiques and explorations reflect on the environmental costs of AI technology.

ENERGY CONSIDERATIONS OF ML: The double-edged sword.

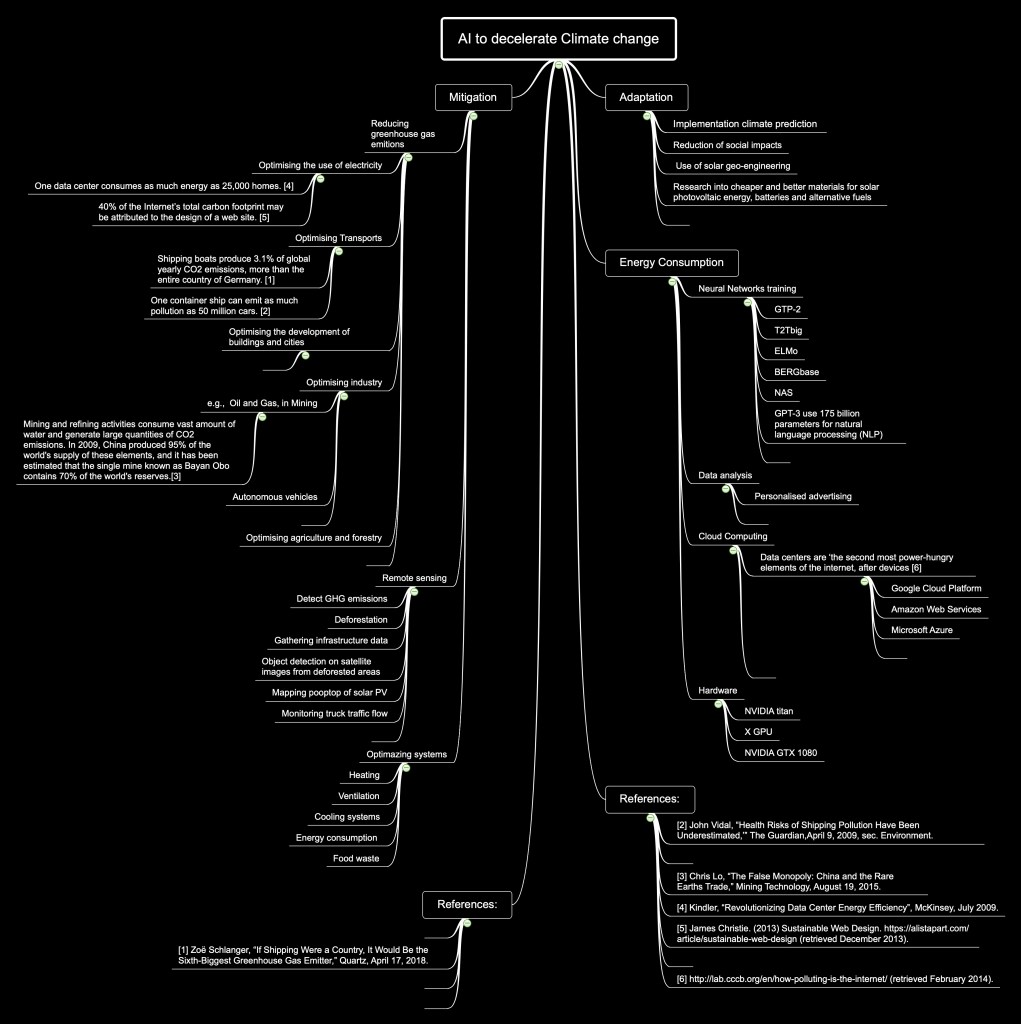

According to Lynn Kaack [2], a member of the Energy Politics Group at ETH Zurich and of the organisation Climatechange.ai, this situation can be described under three premises: mitigation, adaptation and the energy consumption of AI.

First, in terms of mitigation, she states that it is important to address climate change in time, as AI can help reduce greenhouse gas emissions by optimising electricity systems, transport, industry, buildings and cities. Integrated assessment models, which combine climate science and socio-economic factors, help to understand the costs and benefits of different pathways and to find the lowest-cost one. These strategies also focus on reducing consumption through changes in individual behaviour, for example switching to other modes of transport, using more efficient vehicles, or moving from fossil-fuel vehicles to battery electric vehicles.

Second, in terms of adaptation, the strategy consists of building resilience to the consequences of climate change through climate prediction, reduction of social impacts and the use of solar geoengineering. Kaack calls for an ML approach capable of foreseeing, in the short and medium term, how the renewable energy sector can adapt, as well as for research into cheaper and better materials for solar photovoltaics, batteries and alternative fuels.

Finally, in terms of energy consumption, AI models need computing power for training. For example, OpenAI’s GPT-3 has 175 billion parameters, and data centers account for about 1% of worldwide electricity consumption (IEA). According to Kaack, these developments must be monitored. She proposes making industrial production more efficient (and cheaper) and working with AI not in isolation but within machine learning and climate action communities.

CO2 in neural network training and data analysis

Training neural networks to analyse big data requires large infrastructures and enormous resources. These models are expensive to train and develop, due to the cost of hardware, electricity and computing time in the cloud. Consequently, they consume significant energy, and their environmental cost grows in proportion to that consumption. Although some of this energy can come from renewable sources, the high energy demand of these models remains a concern.

In the article “Energy and Policy Considerations for Deep Learning in NLP” [3], Emma Strubell, Ananya Ganesh and Andrew McCallum make a rough estimate of the electricity consumption, carbon emissions and environmental costs of neural network training. Their method estimates the energy, in kilowatt-hours, required to train a series of natural language processing (NLP) models. To do this, they take the total power draw as the combined consumption of the GPUs, CPU and dynamic random-access memory (DRAM), multiply it by the training time, and then multiply the result by the data center’s power usage effectiveness (PUE), which accounts for the additional power required for cooling and other infrastructure.
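The estimate described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors’ code: the function names are hypothetical, and all wattages, the GPU count and the training time are made-up placeholders. The default PUE of 1.58 and the conversion factor of 0.954 lbs of CO2e per kWh (a US average) are, to our understanding, the values Strubell et al. report using; figures for the actual data center and grid should be substituted where known.

```python
"""Rough training-energy estimate in the spirit of Strubell et al. (2019).

Hypothetical helper names; all hardware figures in the example are placeholders.
"""


def training_energy_kwh(hours, cpu_watts, dram_watts, gpu_watts, num_gpus, pue=1.58):
    """Energy in kWh: PUE * hours * (CPU + DRAM + num_gpus * GPU) / 1000."""
    total_watts = cpu_watts + dram_watts + num_gpus * gpu_watts
    return pue * hours * total_watts / 1000.0


def co2e_lbs(energy_kwh, lbs_per_kwh=0.954):
    """Convert energy to pounds of CO2-equivalent using an assumed grid intensity."""
    return energy_kwh * lbs_per_kwh


if __name__ == "__main__":
    # Hypothetical run: 8 GPUs at 250 W each, CPU at 100 W, DRAM at 30 W, for 48 hours.
    kwh = training_energy_kwh(hours=48, cpu_watts=100, dram_watts=30, gpu_watts=250, num_gpus=8)
    print(f"{kwh:.1f} kWh, {co2e_lbs(kwh):.1f} lbs CO2e")
```

With these placeholder inputs the sketch prints `161.5 kWh, 154.1 lbs CO2e`. Because the emissions factor depends on the local energy mix, the same training run can have a very different footprint in another region.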

Their research concludes that training with Tensor Processing Units (TPUs) is more cost-effective than training with GPUs. Additionally, they make several recommendations to the scientific community, for example making information on the training time and computational resources needed to train a neural network publicly available, and prioritising the development of more efficient hardware and algorithms.

In the same line of research, the freelance writer Payal Dhar provides an important analysis of the role of artificial intelligence in climate change in her article “The carbon impact of artificial intelligence” [4]. Dhar agrees with the previous authors that one of the main steps towards reducing the climate impact of AI is to quantify its energy consumption and carbon emissions, and to make this information transparent. She reviews several studies in search of a common denominator, and highlights Crawford and Joler’s art project “Anatomy of an AI” [5] for its analysis of the materials and costs of large-scale AI systems. Like the previous researchers, she concludes that part of the problem lies in the absence of a standard of measurement.

Moreover, she draws on the article “AI and Compute” by Dario Amodei and Danny Hernandez at the OpenAI research lab, which shows that since 2012 the amount of compute used in the largest AI training runs has increased exponentially, doubling roughly every 3.4 months and growing more than 300,000-fold, whereas Moore’s Law, with its two-year doubling period, would have yielded only about a sevenfold increase. [21]
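The gap between the two growth rates can be checked with simple doubling-time arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes the 300,000-fold figure and the 3.4-month doubling time quoted above, derives the time span they imply, and asks how much a quantity doubling every 24 months would grow over that same span. The helper name `growth_factor` is hypothetical.

```python
import math


def growth_factor(months, doubling_months):
    """Growth after `months` for a quantity that doubles every `doubling_months`."""
    return 2 ** (months / doubling_months)


# Number of doublings needed for a 300,000-fold increase (about 18.2).
doublings = math.log2(300_000)
# At a 3.4-month doubling time, that many doublings take roughly 62 months.
span_months = doublings * 3.4
# The same span under Moore's-Law-style doubling every 24 months.
moore_factor = growth_factor(span_months, 24)

print(f"{doublings:.1f} doublings over {span_months:.0f} months; Moore's Law: ~{moore_factor:.0f}x")
```

This yields roughly a sixfold increase under Moore’s Law, the same order as the sevenfold figure in the OpenAI post; the exact number depends on the measurement window the post actually used.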

To conclude, Roy Schwartz and collaborators, in the article “Green AI” (2019) [6], identify three factors that need to be investigated and improved in AI: the cost of executing the model on a single example; the size of the training dataset, which controls the number of times the model is executed; and the number of hyperparameter experiments, which controls how many times the model is trained. Lastly, it has been estimated that by 2025 AI workloads would account for one tenth of the world’s electricity use.
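Schwartz et al. summarise these three factors as a proportionality, Cost(R) ∝ E · D · H, where E is the cost per example, D the dataset size and H the number of hyperparameter experiments. Because the factors multiply, a reduction in any one of them scales the total cost directly, and reductions compound. The sketch below illustrates this with hypothetical numbers; `relative_cost` is an illustrative helper, and the result is a relative cost in arbitrary units, not a measurement of energy.

```python
def relative_cost(e, d, h):
    """Relative cost of a result, assuming Cost(R) is proportional to E * D * H."""
    return e * d * h


# Hypothetical baseline: unit cost per example, 1,000,000 examples, 20 hyperparameter trials.
baseline = relative_cost(e=1.0, d=1_000_000, h=20)
# Same model and data, but only 5 hyperparameter trials.
fewer_trials = relative_cost(e=1.0, d=1_000_000, h=5)
# Additionally halve the per-example cost, e.g. with a smaller model.
smaller_model = relative_cost(e=0.5, d=1_000_000, h=5)

print(baseline / fewer_trials)   # 4.0
print(baseline / smaller_model)  # 8.0
```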

Compute-Efficient: Low-power microcontrollers, IoT devices and mobile phones.

ML and Deep Learning have traditionally been associated with large computers with CPUs and GPUs, or with algorithms running in the cloud. Today, ultra-low-power (ULP) AI microcontrollers enable intelligent processing of local data with minimal power, opening the way to solving many problems for which we do not yet have solutions. [7] These microcontrollers are practically everywhere, embedded in consumer, medical, automotive and industrial devices, toys, washing machines and remote controls.

Peter Warden, in his article “Why the Future of Machine Learning is Tiny” [8], argues that deep learning can be very energy efficient. He notes that a rough estimate of the energy consumed by a neural network can be made from the number of operations it needs to execute. For example, the MobileNetV2 image classification network requires 22 million operations [9]; if a single operation takes 5 picojoules, one run of the network needs 5 picojoules × 22,000,000 = 110 microjoules of energy. Running it once per second therefore draws only about 110 microwatts, which a coin battery could sustain continuously for almost a year.
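Warden’s arithmetic can be reproduced directly. The per-operation energy and operation count below are the figures quoted above; the battery capacity is an assumption added for illustration (a CR2032-class coin cell, taken here as roughly 225 mAh at 3 V). The sketch also ignores idle power, sensors and battery self-discharge, so it is an optimistic upper bound rather than a device specification.

```python
JOULES_PER_OP = 5e-12              # 5 picojoules per operation, as in Warden's example
OPS_PER_INFERENCE = 22_000_000     # MobileNetV2 operation count quoted in the text
INFERENCES_PER_SECOND = 1.0        # one image classified per second

# Energy for one run of the network: 1.1e-4 J, i.e. 110 microjoules.
energy_per_inference_j = JOULES_PER_OP * OPS_PER_INFERENCE
# Average power in watts (joules per second).
power_w = energy_per_inference_j * INFERENCES_PER_SECOND

# Assumption: coin cell storing about 225 mAh at 3 V (amp-hours * volts * seconds/hour = 2430 J).
battery_j = 0.225 * 3.0 * 3600
lifetime_days = battery_j / power_w / 86_400

print(f"{energy_per_inference_j * 1e6:.0f} uJ per inference, ~{lifetime_days:.0f} days")
```

Under these assumptions the sketch prints `110 uJ per inference, ~256 days`, about eight and a half months; a larger cell or a lower inference rate stretches this towards the “almost a year” in Warden’s estimate.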

Finally, running ML on microcontrollers improves privacy: with cloud-based machine learning, raw data, which may contain sensitive or private information, usually has to be sent to the cloud, whereas on a microcontroller the data never needs to leave the device [10].

References

[1] Nadal, Martín & Escudero, César. “Critical Mining: Blockchain and Bitcoin in Contemporary Art”. Artist Re:Thinking the Blockchain, 2017. Print.

[2] Rolnick, David. Priya L. Donti, Lynn H. Kaack, Kelly Kochanski, Alexandre Lacoste, Kris Sankaran, Andrew Slavin Ross, Nikola Milojevic-Dupont, Natasha Jaques, Anna Waldman-Brown, Alexandra Luccioni, Tegan Maharaj, Evan D. Sherwin, S. Karthik Mukkavilli, Konrad P. Körding, Carla Gomes, Andrew Y. Ng, Demis Hassabis, John C. Platt, Felix Creutzig, Jennifer Chayes, Yoshua Bengio. “Tackling Climate Change with Machine Learning”.

[3] Strubell, Emma. Ananya Ganesh, Andrew McCallum. “Energy and Policy Considerations for Deep Learning in NLP”. College of Information and Computer Sciences, University of Massachusetts Amherst.

[4] Dhar, Payal. “The carbon impact of artificial intelligence”. https://www.nature.com/articles/s42256-020-0219-9

[5] Crawford, Kate and Joler, Vladan. “Anatomy of an AI”. https://anatomyof.ai/index.html

[6] Schwartz, Roy and collaborators. “Green AI”, 2019. https://arxiv.org/abs/1907.10597

[7] Analog Devices. “Ultra Low Power Microcontrollers”. https://www.analog.com/en/products/processors-microcontrollers/microcontrollers/ultra-low-power-microcontrollers.html

[8] Warden, Peter. “Why the Future of Machine Learning is Tiny”. https://petewarden.com/2018/06/11/why-the-future-of-machine-learning-is-tiny/

[9] MobileNetV2. https://github.com/tensorflow/models/tree/master/research/slim/nets/mobilenet

[10] Seeed Studio. “Microcontrollers for Machine Learning and AI”. https://www.seeedstudio.com/blog/2019/10/24/microcontrollers-for-machine-learning-and-ai/